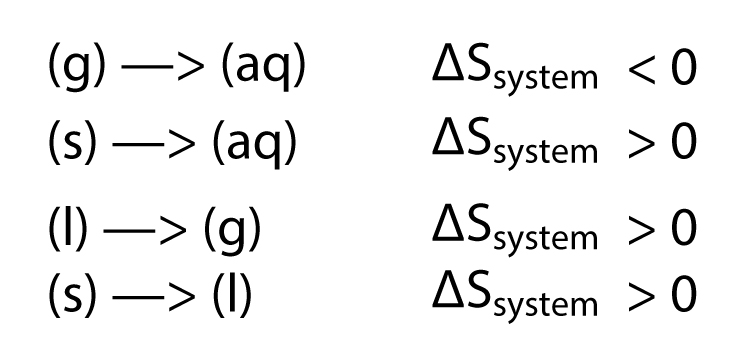

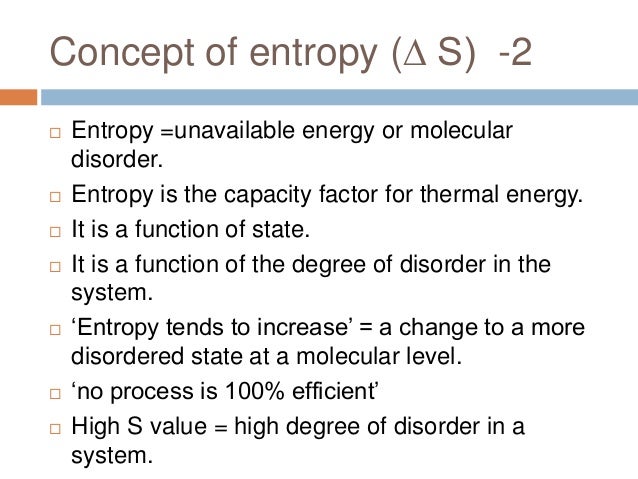

Entropy is a scientific concept, as well as a measurable physical property, that is most commonly associated with a state of disorder, randomness, or uncertainty. The idea comes from a principle of thermodynamics dealing with energy. It usually refers to the idea that everything in the universe eventually moves from order to disorder, with entropy as the measurement of that change. It is evident from our experience that ice melts, iron rusts, and gases mix together.

Entropy is a measure of how dispersed and randomly distributed the energy and mass of a system are. It is also a measure of the number of possible arrangements the atoms in a system can have. First definition: the entropy $S$ is defined as the following quantity:

$$S = k_B \ln \Omega \tag{1}$$

where $k_B$ is just the Boltzmann constant and $\Omega$ is the number of possible microstates that are compatible with the macrostate the system is in.

Second definition: same setup as before, but now the entropy is defined as:

$$S = -k_B \sum_i p_i \ln p_i \tag{2}$$

where $p_i$ is the probability of finding the system in microstate $i$. In this sense, entropy is a measure of uncertainty or randomness: the higher the entropy of an object, the more uncertain we are about the states.

The entropy of an object is also a measure of the amount of energy which is unavailable to do work. The entropic quantity we have defined is very useful in determining whether a given reaction will occur. An apparent discrepancy in the entropy change between an irreversible and a reversible process becomes clear when considering the changes in entropy of both the system and the surroundings, as described in the second law of thermodynamics.
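To make the two definitions concrete, here is a minimal Python sketch of $S = k_B \ln \Omega$ and $S = -k_B \sum_i p_i \ln p_i$. The function names and the example distributions are illustrative assumptions, not something taken from the text above. It shows that when all $\Omega$ microstates are equally likely ($p_i = 1/\Omega$), the second definition reduces to the first, and that a distribution concentrated on one state has lower entropy.

```python
import math

# Boltzmann constant in J/K (exact value in the 2019 SI definition).
K_B = 1.380649e-23


def boltzmann_entropy(num_microstates):
    """Entropy S = k_B * ln(Omega) for Omega equally likely microstates."""
    return K_B * math.log(num_microstates)


def gibbs_entropy(probabilities):
    """Entropy S = -k_B * sum(p_i * ln(p_i)) over microstate probabilities.

    Terms with p_i == 0 are skipped, since p * ln(p) tends to 0 as p -> 0.
    """
    return -K_B * sum(p * math.log(p) for p in probabilities if p > 0)


# Hypothetical example: a system with 4 microstates.
omega = 4
uniform = [1 / omega] * omega        # every microstate equally likely
peaked = [0.97, 0.01, 0.01, 0.01]    # we are almost sure of the state

s_boltzmann = boltzmann_entropy(omega)
s_uniform = gibbs_entropy(uniform)
s_peaked = gibbs_entropy(peaked)
```

Comparing `s_uniform` with `s_boltzmann` checks the equal-probability case, while `s_peaked` illustrates the "less uncertainty, lower entropy" reading of the second definition.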
